Bots (also known as spiders and crawlers) are software programs that perform automated, repetitive tasks. One example is the Google crawler, which automatically scans websites by following links from one webpage to another. Because bots are not real visitors, their behavior is not of interest to you.

Bots are commonly divided into 2 categories:

- Standard bots: Bots that are known in the industry, whether they “declare” themselves as bots or are global enough to be identified as such. These include search engine crawlers, social scrapers that fetch the images that are displayed when a web page is shared, and many more.

- Non-standard bots: Bots that are usually unique on a website. These can be bots that your development team built to perform a functional operation, a unique QA automation platform that performs actions on your site, or any unique bot that is not commonly known in the industry.

Filtering bots is important because bots falsely inflate your data, add noise that obscures real insights, and might even skew your test results. Dynamic Yield automatically filters standard bots, and we recommend that you also filter non-standard bots using Dynamic Yield processes.

How Dynamic Yield automatically filters standard bots

In Dynamic Yield, traffic from known bots and spiders is automatically excluded from all reports. The list of known bots is in sync with the bot traffic listed in the International IAB/ABC Spiders and Bots list, maintained by the Interactive Advertising Bureau (IAB). This list is standard across leading analytics platforms.

Note: If you use the server-side Experience APIs, you must pass the user-agent attribute in the API call (in the device object) so that we can match the user agent against the known bots list and determine whether the “user” is a real user or a bot.
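As a rough illustration of the note above, the following Python sketch adds the visitor's user agent to the device object of a request body before it is sent. The helper name `with_user_agent`, the `userAgent` field name, and the placement of `device` under a `context` object are assumptions made for this example; check the Experience API reference for the exact request schema.

```python
import copy


def with_user_agent(payload, user_agent):
    """Return a copy of `payload` with the visitor's user agent set in the device object.

    Assumption: the device object lives at payload["context"]["device"] and the
    attribute is called "userAgent". Verify both against the API reference.
    """
    if not user_agent:
        # Without a user agent, the request cannot be matched against the known bots list.
        raise ValueError("user_agent is required so that bot traffic can be identified")

    result = copy.deepcopy(payload)  # do not mutate the caller's payload
    device = result.setdefault("context", {}).setdefault("device", {})
    device["userAgent"] = user_agent
    return result
```

In a server-side integration, the value would typically be taken from the `User-Agent` header of the incoming visitor request, not from the HTTP client your server uses to call the API.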

How to filter non-standard bots

You can also filter non-standard bots, for example, a unique automation platform that performs routine actions on your site. You can do this in one of the following ways:

- Excluding their IP addresses in the General Settings screen.

- Excluding their user agents: specify the user agent you want to filter.

Note that setting a filter does not impact historical data, and that filtering data is irreversible.

How to verify that a user agent is a non-standard bot

Although there are different types of bots, many generate pageviews without really interacting with your site. Such a bot appears as a user agent that usually contributes no revenue, does not add items to the cart, and does not make any purchases.

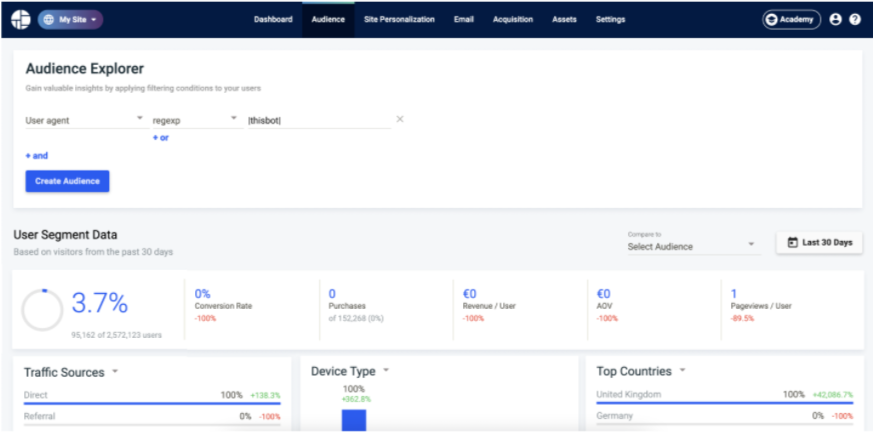

If you suspect that a user agent is a bot, click Audiences in the top navigation and go to the Audience Explorer. Add a User-Agent condition and paste in the user agent you’re concerned about. A user agent that is a bot usually:

- Makes 1 pageview per user.

- Does not trigger any purchase or add-to-cart events.

- Has a single traffic source, device type, and country.
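The three signals above can be combined into a simple check. The following Python sketch is only an illustration: the `UserAgentStats` structure and the `looks_like_bot` function are hypothetical names, and the numbers are assumed to be copied manually from what the Audience Explorer shows for one user agent.

```python
from dataclasses import dataclass


@dataclass
class UserAgentStats:
    """Hypothetical container for figures read off the Audience Explorer."""
    users: int
    pageviews: int
    add_to_cart_events: int
    purchases: int
    traffic_sources: int  # number of distinct traffic sources
    device_types: int     # number of distinct device types
    countries: int        # number of distinct countries


def looks_like_bot(stats, max_pageviews_per_user=1.0):
    """Return True when all three bot signals listed above are present."""
    if stats.users == 0:
        return False  # no traffic, nothing to judge

    # Signal 1: about 1 pageview per user.
    few_pageviews = stats.pageviews / stats.users <= max_pageviews_per_user
    # Signal 2: no purchase or add-to-cart events.
    no_commerce = stats.add_to_cart_events == 0 and stats.purchases == 0
    # Signal 3: a single traffic source, device type, and country.
    single_origin = (
        stats.traffic_sources == 1
        and stats.device_types == 1
        and stats.countries == 1
    )
    return few_pageviews and no_commerce and single_origin
```

A positive result is a hint, not proof: the list says a bot "usually" shows these traits, so a flagged user agent still deserves a manual look before it is filtered.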

How to determine whether you’re affected by bots

- Go to the Audience Explorer, and select a timeframe longer than 1 day.

- Compare the number of users, pageviews, add-to-cart events, and purchases with the same metrics in an analytics platform you trust.

- If the numbers are similar in the 2 platforms: You are probably unaffected by bots.

- If the numbers are different (for example, a 10% discrepancy), but the discrepancy exists in all 4 metrics: You are probably unaffected by bots. Learn more about troubleshooting data discrepancies.

- If the number of users or pageviews is higher in Dynamic Yield, but the numbers of add-to-cart and purchase events are similar: You might be affected by unfiltered bots. Contact Support, and we can help you check whether there is actually a bot (and not an implementation issue), and if so, identify the user agent that should be filtered.
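The comparison above can be sketched as a small Python function. Everything here is illustrative: the function names, the metric keys, and the 5% tolerance for what counts as "similar" are assumptions chosen for the example, not Dynamic Yield values.

```python
METRICS = ("users", "pageviews", "add_to_cart", "purchases")


def relative_diff(dy_value, reference_value):
    """Relative difference of the Dynamic Yield value versus the reference platform."""
    if reference_value == 0:
        return 0.0 if dy_value == 0 else float("inf")
    return (dy_value - reference_value) / reference_value


def assess_bot_impact(dy, reference, tolerance=0.05):
    """Classify a metric comparison using the three cases described above.

    `dy` and `reference` are dicts keyed by METRICS, covering the same timeframe.
    `tolerance` (assumed 5%) is the largest gap still treated as "similar".
    """
    diffs = {m: relative_diff(dy[m], reference[m]) for m in METRICS}

    # Case 1: all numbers are similar in both platforms.
    if all(abs(d) <= tolerance for d in diffs.values()):
        return "unaffected"

    # Case 3: users or pageviews are higher in Dynamic Yield,
    # while add-to-cart and purchase events are similar.
    traffic_inflated = diffs["users"] > tolerance or diffs["pageviews"] > tolerance
    commerce_similar = (
        abs(diffs["add_to_cart"]) <= tolerance
        and abs(diffs["purchases"]) <= tolerance
    )
    if traffic_inflated and commerce_similar:
        return "possible_bots"

    # Case 2: the discrepancy exists in all 4 metrics.
    if all(abs(d) > tolerance for d in diffs.values()):
        return "consistent_discrepancy"

    return "inconclusive"
```

For example, if Dynamic Yield reports 1,500 users and 4,000 pageviews against 1,000 and 3,000 in the reference platform, while add-to-cart and purchase counts match, the function returns `"possible_bots"`. Any result other than `"unaffected"` is a prompt for further investigation, not a diagnosis.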